Agent-First Development: Designing Intelligence Before Interfaces

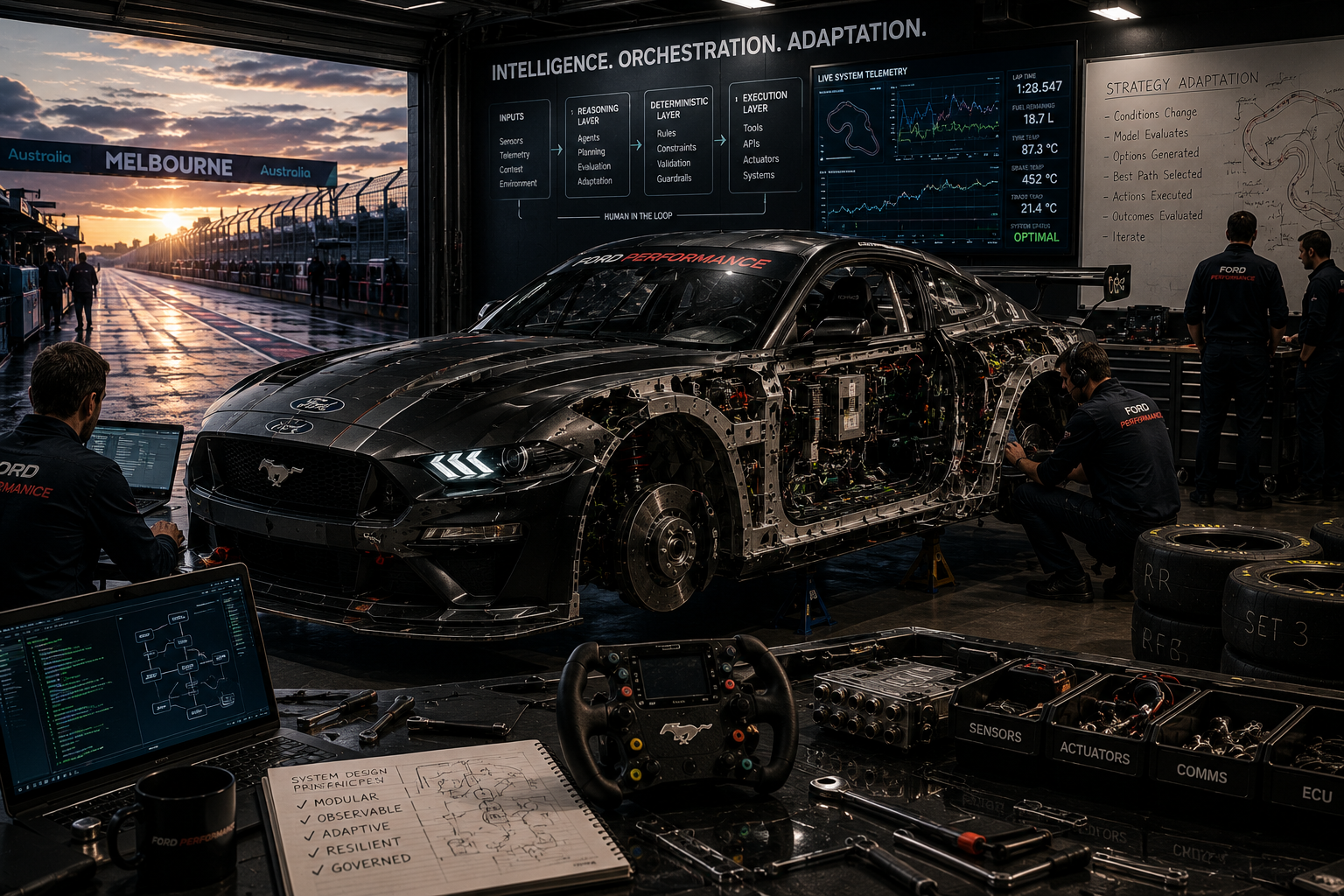

Agent-first development inverts the traditional build order - designing and validating how agents reason, retrieve context, and produce outcomes before any user interface or deep integration is introduced. Here's why that matters.

For over three decades, software architecture has been optimised for deterministic execution. Interfaces guided users through predefined workflows, business logic was encoded explicitly in code, and predictability was the primary design objective.

The emergence of generative AI, large language models and agentic solutions introduces non-deterministic reasoning into mainstream technology. Solutions can now interpret ambiguity, retrieve context dynamically, and adapt at runtime. This capability shifts where architectural complexity resides.

In agentic solutions, the hardest problems do not sit in the interface layer. They emerge in orchestration, evaluation, governance, tool boundaries, and reasoning. The mistake many teams make is simply embedding intelligence into interfaces rather than architecting it as a capability.

Agent-first development is a methodological inversion of traditional build order. It prioritises designing and validating intelligence before constructing user interfaces, integration layers, and storage. Rather than treating agents as features embedded within applications, it treats reasoning as a first-class architectural concern.

The Historical Model: Deterministic Software

Before the emergence of generative AI, most software systems were built on a fundamentally deterministic model. Business logic was encoded explicitly in code. Data was exchanged through rigid, formally defined contracts. User interfaces guided people through carefully designed, linear journeys. For a given input, the system was expected to produce a known and predictable output, every time.

This model worked well because it was controllable, testable, and repeatable. Developers could reason about behaviour, write test cases for known scenarios, and deploy software with confidence that it would behave exactly as designed.

Even where machine learning entered the picture - sentiment analysis, image recognition, entity extraction, topic classification - the outputs were still largely deterministic in practice. A model might return a probability score, but the system's response to that score was fixed.

A useful way to think about traditional software is like following a recipe. If you put the same ingredients into the bowl, in the same quantities, and follow the same steps, you expect the same cake every time. The process is reliable precisely because nothing is left to interpretation.

The challenge with deterministic software wasn't what it could do - it was what it couldn't anticipate. Every possible scenario had to be known in advance, explicitly designed for, coded, tested, and deployed. The familiar pattern emerged everywhere: If this happens, then do that. Else if this other thing happens, do something else. Else, fail. This worked reasonably well in tightly bounded domains.

But real-world human workflows are rarely that clean. Humans are exceptional at handling ambiguity. Information is incomplete, inputs are messy, context matters, exceptions are common. And critically, humans improvise.

In practice, many business processes relied on people to absorb the complexity before it ever reached the software. Don't have sugar in the cupboard? No problem, we'll substitute honey. The system never had to know about the substitution, because a human made the judgment call upstream. This led to an important but often unspoken reality of traditional systems: humans became the error handlers.

This doesn't suggest that deterministic software is irrelevant today. It remains essential, particularly in domains where predictability, repeatability, and strict control are required. Non-deterministic workflows are powerful, but they are not a suitable fit for every problem.

The Shift to Non-Deterministic Agentic Solutions

With the rise of large language models, software systems have taken a meaningful step closer to how humans actually work. Computers have long struggled with ambiguity, nuance, and improvisation - areas where people naturally excel. LLMs have dramatically improved our ability to handle these grey areas, particularly when dealing with unstructured inputs such as natural language, incomplete information, or evolving requirements.

Modern models bring broad general knowledge by default, and this can be extended or contextualised through techniques such as fine-tuning, Retrieval-Augmented Generation (RAG), and agent-to-agent interaction patterns. Rather than being limited to rigid inputs and outputs, these systems can reason over context, draw from external knowledge sources, and adapt their behaviour based on the situation at hand.

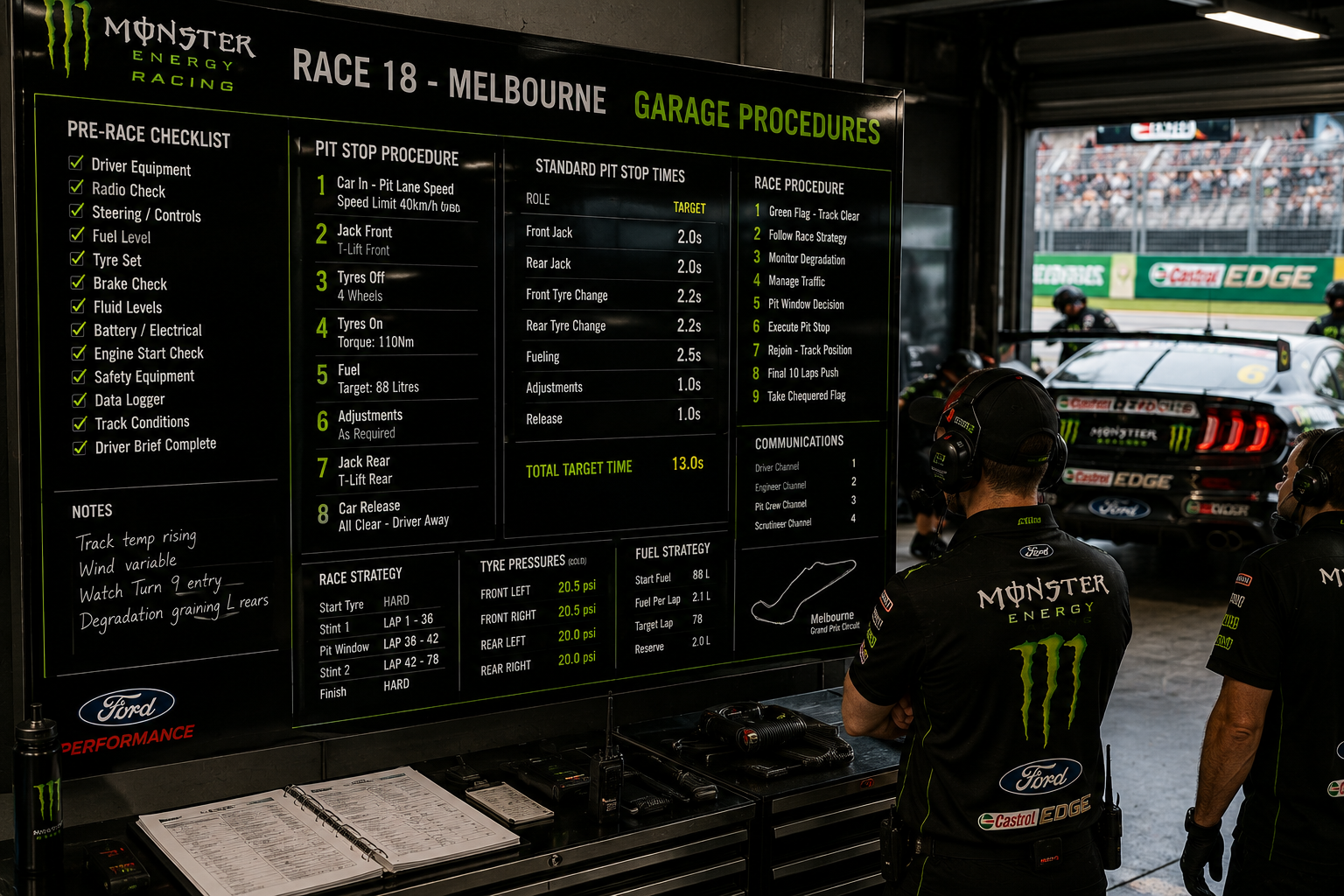

Importantly, these capabilities do not replace deterministic workflows. Instead, non-deterministic components are increasingly embedded within otherwise well-defined processes. An AI agent may be invoked at a specific point in a workflow, but how it performs its task can vary depending on the context, the data it retrieves, and the intent it infers.

AI agents excel most when we rethink how work is delegated and executed. For example, a finance team could use agents to reconcile invoices, bank transactions, and expenses every day so that by month-end most mismatches and missing documents are already flagged and resolved. The humans then only need to review exceptions and approve the final close instead of doing all the work in one monthly rush. The process is no longer a rigid flow; it becomes a dynamic orchestration of tasks that can adapt in real time.

At their core, most AI agents within these solutions consist of three primary elements:

- a model

- a set of instructions that function much like a job description

- a piece of work to perform

This mirrors how we delegate work to people. And just like people, agents can be influenced. If the instructions are vague, conflicting, or poorly constrained, an agent may behave in unintended ways. Given the right combination of prompts or inputs, it can be persuaded to step outside its intended role. This is why guardrails and defensive design are essential. Prompt design alone is insufficient. Systems must be built with clear boundaries, input validation, output controls, and security protections that assume the agent will be challenged, intentionally or otherwise.
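To make the three elements and the surrounding guardrails concrete, here is a minimal sketch. Everything in it is an illustrative assumption rather than a real framework: the `Agent` class, the `echo_model` stand-in for a model call, the size limit, and the banned-term list are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stand-in for a real model call: (instructions, work) -> text.
ModelFn = Callable[[str, str], str]


@dataclass
class Agent:
    model: ModelFn                  # the model
    instructions: str               # the "job description"
    max_input_chars: int = 2000     # illustrative input limit
    banned_output_terms: tuple = ("password",)  # illustrative output policy

    def run(self, work: str) -> str:
        # Input validation: reject oversized input before the model sees it.
        if len(work) > self.max_input_chars:
            raise ValueError("input exceeds allowed size")
        output = self.model(self.instructions, work)
        # Output control: block responses that contain a banned term.
        if any(term in output.lower() for term in self.banned_output_terms):
            return "[blocked by output policy]"
        return output


def echo_model(instructions: str, work: str) -> str:
    """Fake model so the sketch runs without any API access."""
    return f"Summary: {work[:40]}"


agent = Agent(model=echo_model, instructions="Summarise invoices in one line.")
result = agent.run("Invoice 1042 from Acme for 300.00 is unpaid.")
```

The checks live outside the prompt on purpose: they still apply even if the instructions are bypassed.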

There are also clear limits to what LLMs are good at. While they excel at language, reasoning, and synthesis, they are not well suited to tasks requiring precise calculation, direct system interaction, or deterministic execution. This is where tools become critical. Code interpreters can run model-generated code to solve mathematical problems, Model Context Protocol (MCP) servers and external APIs connect agents to other systems and platforms, and RAG pipelines let agents draw on the systems that hold your knowledge. Rather than trying to do everything themselves, agents can and should orchestrate other agents and capabilities across your broader environment.

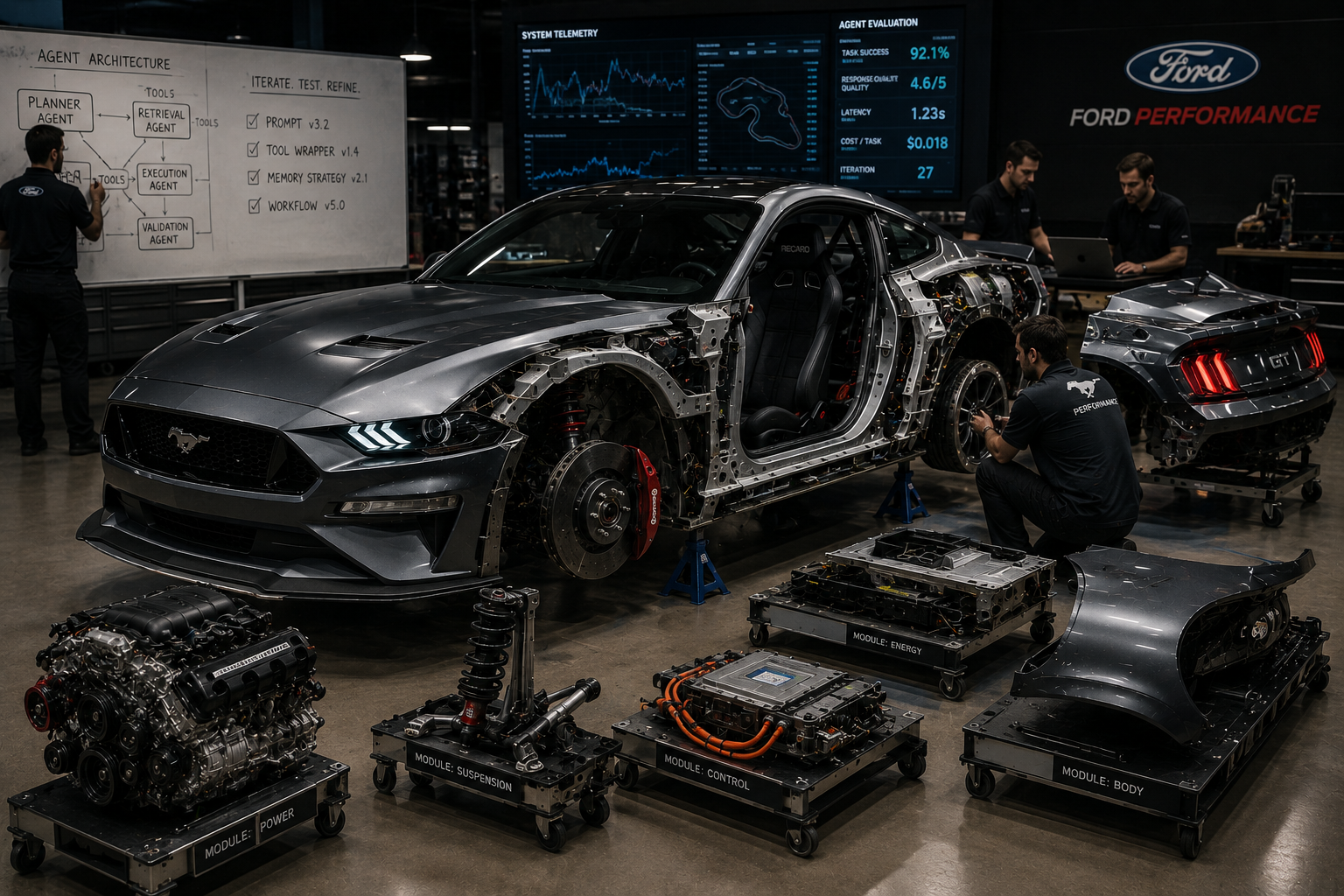

As these systems grow in complexity, architectural patterns matter. Single, monolithic agents quickly become fragile and difficult to reason about. A more effective approach mirrors principles we already understand from distributed systems and microservices: teams of specialised agents, each responsible for a discrete task, coordinated by an orchestrator. This separation of responsibilities improves reliability, traceability, security, and quality.
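As a rough sketch of that pattern, the orchestrator below runs named, specialised agents in sequence and keeps a trace of each hand-off. The agents are plain functions standing in for model-backed components, and all names (`Orchestrator`, `extract_agent`, `classify_agent`) are hypothetical.

```python
from typing import Callable

# Each specialised agent is modelled as a function from text to text.
# In a real system each would wrap a model call with its own instructions.
AgentFn = Callable[[str], str]


def extract_agent(text: str) -> str:
    """Single responsibility: clean up the raw input."""
    return text.strip()


def classify_agent(text: str) -> str:
    """Single responsibility: label the document type."""
    return "invoice" if "invoice" in text.lower() else "other"


class Orchestrator:
    def __init__(self) -> None:
        self.agents: dict[str, AgentFn] = {}
        self.trace: list[tuple[str, str]] = []  # (agent name, output)

    def register(self, name: str, agent: AgentFn) -> None:
        self.agents[name] = agent

    def run(self, plan: list[str], payload: str) -> str:
        # Run the named agents in order, feeding each output to the next.
        for name in plan:
            payload = self.agents[name](payload)
            self.trace.append((name, payload))
        return payload


orchestrator = Orchestrator()
orchestrator.register("extract", extract_agent)
orchestrator.register("classify", classify_agent)
label = orchestrator.run(["extract", "classify"], "  Invoice #7 overdue ")
```

Because every step is named and recorded, a bad outcome can be traced to the agent that produced it.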

Equally important is how quality assurance is handled. Allowing agents to review their own work often leads to blind spots, much as it does when humans review their own work. Better outcomes are achieved by introducing separate review agents explicitly tasked with validation, challenge, or refinement. In more advanced patterns, multiple agents using different models or personas perform the same task independently and converge on a consensus. This diversity of perspective can improve robustness and reduce the risk of subtle errors.
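One simple way to implement the consensus pattern is a majority vote with a minimum level of agreement. The sketch below assumes answers can be compared for equality, which in practice means normalising them first. The three worker functions are hypothetical stand-ins for agents backed by different models or personas.

```python
from collections import Counter
from typing import Callable, Optional, Sequence


def consensus(
    workers: Sequence[Callable[[str], str]],
    task: str,
    min_agreement: int = 2,
) -> Optional[str]:
    """Return the most common answer, or None if agreement is too weak."""
    answers = [worker(task) for worker in workers]
    answer, votes = Counter(answers).most_common(1)[0]
    return answer if votes >= min_agreement else None


# Hypothetical workers; each would normally call a different model.
def cautious(task: str) -> str:
    return "approve"


def strict(task: str) -> str:
    return "reject"


def pragmatic(task: str) -> str:
    return "approve"


decision = consensus([cautious, strict, pragmatic], "Review expense claim 17")
```

When `consensus` returns `None`, the sensible next step is usually escalation to a human rather than another automated retry.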

Defining Agent-First Development

Rather than starting with screens, forms, and user journeys, agent-first development asks developers to focus first on the non-deterministic and cognitive capabilities of the system. The goal is to design and validate how an agent reasons, retrieves context, applies constraints, and produces outcomes - before any user interface is introduced.

At its core, agent-first development is about defining and creating the workflow and behaviour of the agent independently of how a human might eventually interact with it. In its simplest form, an agent:

- receives an input

- interprets the request

- generates an output

It doesn't matter whether you have a single agent or 100 - they all function in this same way. What matters is how you chain them together.

Once we've understood the process we need to create and the job descriptions each agent in the process will adopt, we can then begin to prove value by validating:

- Are we providing the right inputs to each agent?

- With the right inputs, does each agent produce a high-quality output?

- Do we have the right knowledge sources and tools available?

- Are the agents starting to meet the pre-defined success measures?
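These questions can be turned into a small, repeatable check long before any UI exists. The sketch below is one possible shape, under stated assumptions: `fake_agent` stands in for a real agent, each case pairs an input with a hypothetical acceptance check, and the 0.9 target is an illustrative success measure.

```python
from typing import Callable

# A case is (input text, check that decides whether the output is acceptable).
Case = tuple[str, Callable[[str], bool]]


def pass_rate(agent: Callable[[str], str], cases: list[Case]) -> float:
    """Fraction of cases whose output satisfies its check."""
    passed = sum(1 for text, check in cases if check(agent(text)))
    return passed / len(cases)


def fake_agent(text: str) -> str:
    """Stand-in for a real agent; it simply upper-cases its input."""
    return text.upper()


cases: list[Case] = [
    ("ok", lambda out: out == "OK"),
    ("refund please", lambda out: "REFUND" in out),
    ("hello", lambda out: out == "hello"),  # deliberately failing case
]

rate = pass_rate(fake_agent, cases)
meets_target = rate >= 0.9  # illustrative pre-defined success measure
```

Running the same cases after every change to instructions, tools, or knowledge sources shows whether the agent is moving toward or away from the target.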

In practice, the real challenge is refining agent instructions so outputs consistently meet the required quality, accuracy, and level of determinism. This becomes even more difficult as guardrails for safety, security, compliance, and misuse prevention are layered in.

This leads to an important principle: do not over-scope your prototypes in the first instance. The goal of early agent development is to prove business value, not to achieve technical perfection. Start with narrowly defined capabilities, validate that they deliver meaningful outcomes, and only then iterate toward scale and technical excellence. Attempting to solve every edge case upfront often slows progress and obscures whether the agent is valuable at all.

A critical but often overlooked aspect of agent-first development is the ability for an agent to pause, clarify, and seek additional input from a human in the loop. This isn't just a step in the development process - it's a fundamental element of a best-practice agentic workflow that mirrors how humans work. When faced with incomplete, ambiguous, or conflicting information, people ask follow-up questions before proceeding. Expecting an agent to operate differently leads to brittle systems that either hallucinate confidence or fail silently.

Well-designed agents should be allowed to acknowledge uncertainty and request clarification at appropriate points in the workflow. This not only improves output quality and accuracy but also builds trust with users by making the agent's reasoning and limitations explicit.
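A minimal sketch of this behaviour is to let the agent return either an answer or a clarification request, and to loop until it has what it needs. The field names, the `expense_agent` function, and the round limit are hypothetical. A real agent would decide what is missing through reasoning rather than a fixed field list.

```python
from dataclasses import dataclass
from typing import Callable, Union


@dataclass
class Answer:
    text: str


@dataclass
class Clarification:
    question: str


REQUIRED_FIELDS = ("amount", "currency")  # illustrative only


def expense_agent(request: dict[str, str]) -> Union[Answer, Clarification]:
    """Ask for missing details instead of guessing them."""
    missing = [name for name in REQUIRED_FIELDS if name not in request]
    if missing:
        return Clarification(f"Please provide: {', '.join(missing)}")
    return Answer(f"Logged {request['amount']} {request['currency']}")


def run_with_human(
    request: dict[str, str],
    ask_human: Callable[[str], dict[str, str]],
    max_rounds: int = 3,
) -> Answer:
    result = expense_agent(request)
    rounds = 0
    while isinstance(result, Clarification):
        if rounds == max_rounds:
            raise RuntimeError("still missing information after clarification")
        # Merge the human's reply into the request and try again.
        request = {**request, **ask_human(result.question)}
        result = expense_agent(request)
        rounds += 1
    return result


final = run_with_human({"amount": "12.50"}, lambda question: {"currency": "EUR"})
```

The round limit keeps the workflow from stalling indefinitely when the human cannot supply the missing information.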

Agent-first development embraces iteration by design. Value first. Refinement second.

Deterministic Services as Capability Providers

Non-deterministic capabilities do not replace deterministic workflows. Instead, they sit alongside them, with each applied where it is most effective, similar to how humans form the intelligence layer in workflows today.

There are entire classes of problems where determinism is not just preferable, but essential. Repetitive mathematical calculations, financial reconciliations, scheduling, enforcement of business rules, and transactional interactions with external systems all require consistency. In these cases, given the same inputs, the system must produce the same outputs every time. Variability is not a feature; it is a defect.

For this reason, agentic solutions do not attempt to make agents responsible for everything. Instead, they rely on a clear separation of concerns between reasoning and execution. Broadly, deterministic capabilities are integrated in two primary ways.

The first approach is agents leveraging tools. In this model, agents act as decision-makers and orchestrators, but delegate specific tasks to deterministic tools. These tools may include calculation engines, code interpreters, APIs, policy evaluators, or legacy systems. The agent determines when a task should be performed and why, while the tool ensures it is performed correctly and consistently. This allows agents to reason in flexible, human-like ways without sacrificing precision where it matters.
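The sketch below illustrates this first approach under simple assumptions. The agent's choice is represented by `fake_agent_decision`, a hypothetical stand-in for a model selecting a tool and its arguments, while the tool itself uses Python's `decimal` module so the arithmetic is exact and repeatable.

```python
from decimal import Decimal
from typing import Callable


def total_with_tax(net: str, rate: str) -> str:
    """Deterministic tool: exact decimal arithmetic, rounded to cents."""
    total = Decimal(net) * (1 + Decimal(rate))
    return str(total.quantize(Decimal("0.01")))


# Registry of tools the agent is allowed to call (illustrative).
TOOLS: dict[str, Callable[..., str]] = {"total_with_tax": total_with_tax}


def fake_agent_decision(request: str) -> dict:
    """Stand-in for a model deciding when and why to use a tool."""
    return {"tool": "total_with_tax", "args": {"net": "19.99", "rate": "0.20"}}


def execute(decision: dict) -> str:
    """The tool, not the model, performs the calculation."""
    tool = TOOLS.get(decision["tool"])
    if tool is None:
        raise KeyError(f"unknown tool: {decision['tool']}")
    return tool(**decision["args"])


answer = execute(fake_agent_decision("What is 19.99 plus 20% tax?"))
# answer == "23.99"
```

The model never produces the number itself. It only chooses the tool and supplies the arguments, so the result is the same on every run.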

The second approach is agents operating as components within a broader deterministic workflow. Here, the overall process remains structured and predictable, but individual steps are augmented with non-deterministic reasoning. An agent might be invoked to interpret unstructured input, summarise information, classify intent, or generate content - before handing control back to the deterministic workflow. This pattern is particularly effective in enterprise environments where auditability and control are required, but human-like judgment is still valuable at specific decision points.
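A hedged sketch of this second approach: the workflow below is fixed and auditable, and the only non-deterministic step is intent classification. `classify_intent` is a keyword-based stand-in for a model call, and the intent labels and queue names are hypothetical.

```python
from typing import Callable

ALLOWED_INTENTS = {"refund", "complaint", "other"}
QUEUES = {"refund": "billing", "complaint": "support", "other": "triage"}


def classify_intent(message: str) -> str:
    """Stand-in for a model call that interprets unstructured text."""
    lowered = message.lower()
    if "refund" in lowered:
        return "refund"
    if "broken" in lowered:
        return "complaint"
    return "other"


def handle_ticket(
    message: str,
    classifier: Callable[[str], str] = classify_intent,
) -> str:
    # Step 1 (deterministic): validate the input.
    if not message.strip():
        raise ValueError("empty message")
    # Step 2 (non-deterministic): ask the agent for an intent label.
    intent = classifier(message)
    # Step 3 (deterministic): never trust the label blindly.
    if intent not in ALLOWED_INTENTS:
        intent = "other"
    # Step 4 (deterministic): route using a fixed table.
    return QUEUES[intent]


queue = handle_ticket("I would like a refund for order 88")
```

Whatever the agent returns, the workflow can only end in one of three known queues, which is what keeps the process auditable.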

When using an agent-first development methodology, the interface between non-deterministic reasoning and deterministic execution becomes one of the most critical parts of the system to design, test, and validate. This boundary is where intent is translated into action, where uncertainty is resolved into decisions, and where mistakes are most likely to have real-world consequences.

By designing agents first, teams are forced to explicitly define how non-deterministic reasoning interacts with deterministic capabilities, including:

- Tools that agents invoke for reliable execution of well-defined tasks

- Data providers retrieving authoritative and up-to-date information

- Execution services responsible for performing actions in the real world

Validating these interactions early allows teams to answer fundamental questions long before UI concerns enter the picture: what decisions should the agent be allowed to make, where must control be enforced, and how does the system behave when confidence is low or information is incomplete?

This architectural separation allows systems to embrace non-determinism where judgment and interpretation are required, while preserving determinism where accuracy, reliability, and control are non-negotiable. Most importantly, it ensures these boundaries are designed intentionally - rather than being retrofitted after interfaces and workflows have already locked in incorrect assumptions.
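One way to express such a boundary is a deterministic gate between what the agent proposes and what actually executes. The sketch below is illustrative only. The action names, thresholds, and the idea that a usable confidence score is available are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    name: str
    amount: float
    confidence: float  # assumed to come from the agent or an evaluator (0.0-1.0)


# Illustrative policy: what the agent may do on its own, and within what limits.
AUTONOMOUS_ACTIONS = {"issue_refund"}
MAX_AUTONOMOUS_AMOUNT = 100.0
MIN_CONFIDENCE = 0.8


def gate(action: ProposedAction) -> str:
    """Deterministic policy check applied to every proposed action."""
    if action.name not in AUTONOMOUS_ACTIONS:
        return "reject"
    if action.confidence < MIN_CONFIDENCE or action.amount > MAX_AUTONOMOUS_AMOUNT:
        return "escalate_to_human"
    return "execute"


outcome = gate(ProposedAction("issue_refund", amount=40.0, confidence=0.93))
```

The policy is ordinary code, so it can be unit-tested and audited independently of any model.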

The New Sources of Complexity

Building agentic solutions introduces a new layer of engineering complexity. Some of these challenges will feel familiar to teams who have operated cloud-scale systems, but they take on a different character in the presence of non-deterministic reasoning.

Cost control is no longer limited to infrastructure and API traffic. Each user interaction may trigger multiple model calls, retrieval queries, tool executions, and review loops. Poor orchestration design can silently multiply compute usage. In agentic solutions, cost becomes a function of reasoning depth and behavioural design, not just request volume.
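A back-of-the-envelope model shows how quickly this multiplies. The figures below are illustrative assumptions, not real prices, and the formula deliberately ignores retrieval and tool costs.

```python
def cost_per_interaction(
    agents: int,
    review_loops: int,
    tokens_per_call: int,
    price_per_1k_tokens: float,
) -> float:
    """Rough cost of one user interaction, assuming every agent
    makes one model call plus one extra call per review loop."""
    calls = agents * (1 + review_loops)
    return calls * tokens_per_call / 1000 * price_per_1k_tokens


# One agent, no review: a single model call.
shallow = cost_per_interaction(1, 0, 1500, 0.01)

# Four agents, each reviewed twice: twelve model calls.
deep = cost_per_interaction(4, 2, 1500, 0.01)

multiplier = deep / shallow  # about 12x for the same user request
```

Request volume is identical in both cases. Only the orchestration design changed.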

Quota governance similarly shifts in importance. Model providers enforce rate limits and token caps, and multi-agent orchestration patterns can consume quotas in bursts. Systems must be designed to handle throttling gracefully, queue intelligently, or fall back to alternative models.
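The sketch below shows one way to combine exponential backoff with a fallback model. `RateLimited` is a hypothetical exception, since real provider SDKs raise their own error types, and the two model functions are fakes. The `sleep` parameter is injectable so the example runs instantly.

```python
import time
from typing import Callable, Sequence


class RateLimited(Exception):
    """Hypothetical error raised by a model client when throttled."""


def call_with_fallback(
    models: Sequence[Callable[[str], str]],
    prompt: str,
    retries: int = 2,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Try each model in order, backing off between throttled attempts."""
    for model in models:
        for attempt in range(retries + 1):
            try:
                return model(prompt)
            except RateLimited:
                if attempt < retries:
                    sleep(base_delay * 2 ** attempt)  # 0.5s, 1.0s, ...
    raise RuntimeError("all models are throttled")


def primary(prompt: str) -> str:
    raise RateLimited()  # simulate an exhausted quota


def fallback(prompt: str) -> str:
    return f"fallback: {prompt}"


delays: list[float] = []  # record delays instead of actually sleeping
reply = call_with_fallback([primary, fallback], "hi", sleep=delays.append)
```

In a multi-agent system this logic belongs in one shared client, so that bursts from several agents are throttled consistently.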

Observability must extend beyond logs and metrics. It is no longer sufficient to know that a request succeeded or failed. Teams need visibility into reasoning chains, tool usage, intermediate decisions, and confidence levels. Without insight into how conclusions were reached, debugging, governance, and trust become fragile.
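As a rough illustration, a trace can be as simple as an ordered list of structured steps attached to a request. The `Trace` and `Step` classes and the step kinds below are hypothetical. Production systems would more likely emit these records through an existing tracing stack.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class Step:
    kind: str                           # e.g. "model_call", "tool_call", "decision"
    detail: str
    confidence: Optional[float] = None  # only where a score is available


@dataclass
class Trace:
    request_id: str
    steps: list[Step] = field(default_factory=list)

    def record(self, kind: str, detail: str, confidence: Optional[float] = None) -> None:
        self.steps.append(Step(kind, detail, confidence))

    def to_json(self) -> str:
        """Serialise the whole reasoning chain for storage or review."""
        return json.dumps(asdict(self))


trace = Trace(request_id="req-001")
trace.record("model_call", "classified intent as refund", confidence=0.91)
trace.record("tool_call", "lookup_order(order_id=ORD-000123)")
trace.record("decision", "refund approved within policy")
exported = trace.to_json()
```

Each record answers a question that logs alone cannot: what the system decided at that point, and on what basis.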

Data quality remains foundational, but the impact is amplified. Retrieval layers, memory stores, and knowledge bases influence not just outputs, but reasoning pathways. Poor or outdated data does not simply return incorrect values - it subtly distorts judgment and can even compromise agents.

Alongside these evolved concerns, agentic solutions introduce entirely new challenges.

Unlike diamonds, models are not forever. They evolve rapidly. New versions are released, older versions are retired, and competing vendors introduce models optimised for different tasks. Model selection becomes an ongoing architectural decision rather than a one-time dependency. Systems must be designed with abstraction and adaptability in mind.
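One common way to build in that adaptability is to have agents depend on a narrow interface rather than a vendor SDK. In the sketch below, `ChatModel`, the two vendor classes, and the role mapping are all hypothetical. Real adapters would wrap actual provider clients.

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only surface the rest of the system is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class VendorAModel:
    def complete(self, prompt: str) -> str:
        return f"[vendor-a] {prompt}"  # stand-in for a real API call


class VendorBModel:
    def complete(self, prompt: str) -> str:
        return f"[vendor-b] {prompt}"  # stand-in for a real API call


# Model choice is configuration keyed by role, not code scattered through agents.
MODEL_FOR_ROLE: dict[str, ChatModel] = {"summariser": VendorAModel()}


def summarise(text: str, models: dict[str, ChatModel] = MODEL_FOR_ROLE) -> str:
    return models["summariser"].complete(f"Summarise: {text}")


before = summarise("quarterly report")
after = summarise("quarterly report", models={"summariser": VendorBModel()})
```

Swapping or retiring a model then becomes a one-line configuration change followed by a re-run of the evaluation suite.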

Evaluation becomes a continuous discipline. In deterministic systems, correctness is often binary. In agentic solutions, outputs are probabilistic and contextual. As models are upgraded or data changes, responses must be re-baselined against defined success measures. Quality regression is rarely obvious; it requires structured evaluation frameworks.
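Re-baselining can start very simply: score the same cases before and after a change, and flag any case that drops by more than an agreed tolerance. The case names, scores, and tolerance below are made-up illustrations. How each score is produced (human rating, rubric, automated grader) is left open.

```python
def regressions(
    baseline: dict[str, float],
    candidate: dict[str, float],
    tolerance: float = 0.05,
) -> list[str]:
    """Names of cases where the candidate scores worse than the
    baseline by more than the tolerance. Missing cases count as 0.0."""
    return sorted(
        case
        for case, score in baseline.items()
        if candidate.get(case, 0.0) < score - tolerance
    )


# Illustrative per-case quality scores between 0.0 and 1.0.
baseline_scores = {"refund_policy": 0.92, "tone": 0.80, "citations": 0.75}
upgraded_scores = {"refund_policy": 0.93, "tone": 0.70, "citations": 0.74}

flagged = regressions(baseline_scores, upgraded_scores)
```

Here the upgraded model improves one case and holds another within tolerance, but regresses on tone, which an average score alone would hide.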

Safety is paramount. While cloud providers offer moderation and filtering capabilities, these guardrails must be explicitly configured and tested. Flexibility without constraint increases risk. Safety is not a feature toggle; it is an architectural responsibility.

Security introduces a fundamentally new dynamic. AI agents interpret language and can be manipulated through adversarial inputs or prompt injection. Malicious actors may attempt to override instructions, extract sensitive data, or redirect behaviour. Defensive design must assume the agent will be challenged. Boundaries, tool isolation, and strict validation are foundational, not optional.
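Tool isolation and strict validation can be sketched as a per-agent allowlist plus a tight check on every argument. The agent names, tool names, and order ID format below are hypothetical. The point is that the check runs in ordinary code, outside anything a prompt can influence.

```python
import re

# Each agent may only call the tools listed for it (illustrative).
AGENT_TOOL_ALLOWLIST = {
    "support_agent": {"lookup_order"},
    "finance_agent": {"lookup_order", "issue_refund"},
}

ORDER_ID = re.compile(r"ORD-\d{6}")  # hypothetical order ID format


def authorise(agent: str, tool: str, args: dict) -> bool:
    """Allow a tool call only if the agent holds the permission
    and the arguments match the expected shape exactly."""
    if tool not in AGENT_TOOL_ALLOWLIST.get(agent, set()):
        return False
    order_id = args.get("order_id")
    return isinstance(order_id, str) and ORDER_ID.fullmatch(order_id) is not None


# A manipulated support agent tries to issue a refund: denied.
injected = authorise("support_agent", "issue_refund", {"order_id": "ORD-123456"})

# A legitimate lookup with a well-formed ID: allowed.
legitimate = authorise("support_agent", "lookup_order", {"order_id": "ORD-123456"})
```

Even if an adversarial input persuades the agent to attempt the call, the surrounding system refuses to carry it out.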

At scale, these concerns are what separate a prototype from a production-ready solution. They reinforce a central principle: the intelligence layer must be designed deliberately before interfaces are constructed. They cannot be ignored, but they also should not prevent early experimentation.

Agent-first development separates validation from hardening. First prove the intelligence is valuable, then engineer it to be resilient, governed, and secure.

Designing for Reasoning, Not Demonstration

Teams that treat agents as features embedded within user interfaces often make predictable mistakes.

First, they assume a user interface is required for operation. Many agentic solutions do not require UIs at all. Agents may operate as background workers, orchestrators, integration layers, or API-driven services. Constraining the processes in agentic solutions to predefined screens and flows can limit their flexibility and prematurely hard-code assumptions about how work must be performed.

Second, UI-first approaches tend to optimise for demonstration rather than durability. When agents are embedded into an existing interface, the focus often shifts toward showing visible value quickly. Prompts are tuned to make the demo compelling. Edge cases are hidden. Architectural trade-offs are deferred. What emerges may look impressive, but the underlying reasoning layer remains fragile.

Third, starting with the interface obscures how a process should be decomposed. Agentic solutions often require breaking work into smaller, specialised capabilities: retrieval, planning, execution, validation, refinement. Designing around screens encourages teams to think in terms of pages and forms rather than responsibilities and reasoning boundaries.

Agent-first development surfaces uncertainty early. It forces teams to confront fundamental questions:

- Where does non-deterministic reasoning belong?

- Where must deterministic enforcement take over?

- What decisions can an agent make autonomously?

- What requires human oversight?

These are architectural decisions, not interface decisions.

Prompt engineering, while useful, is a local optimisation. It improves behaviour within a given boundary. Agent-first development is a systems design and build discipline.

By designing and building intelligence before interfaces, teams build systems that are adaptable, governable, and resilient. Interfaces can evolve. Prompts can be refined. Models can be replaced. But architectural boundaries, once embedded into workflows and user experiences, are significantly harder to unwind.

Agent-first development prevents intelligence from being treated as decoration. It establishes reasoning as the foundation of the system, not an enhancement layered on top.

Agentic Software Development as an Enabler

Agent-first development changes what we prioritise. Agentic software development changes how quickly we can execute on those priorities.

One of the most immediate and tangible benefits of agentic software development is not architectural sophistication, but relief. Modern software development involves a significant amount of necessary but repetitive work: writing boilerplate code, scaffolding APIs, wiring integrations, creating test harnesses, drafting documentation, and implementing standard patterns. While essential, these activities do not meaningfully advance the reasoning architecture at the heart of an agent-first system.

When building agent-first systems, the most valuable early work involves:

- Refining and iterating on prompts and instruction sets

- Breaking down complex workflows into specialised agents

- Designing tool wrappers, contracts, and execution boundaries

These activities require rapid iteration. Prompts need to be tested and refined. Tools need to be scaffolded and adjusted. Agentic software development dramatically reduces the friction of this experimentation. Developers can refine prompts and generate tool wrappers in minutes rather than days.

This acceleration is particularly important because agent-first development deliberately postpones UI concerns. Because teams focus first on reasoning and orchestration, they must be able to iterate quickly at the intelligence layer. Without AI-assisted development, this level of experimentation would be prohibitively slow.

Additionally, not all agentic solutions require deterministic user interfaces. Some operate entirely through conversational interfaces or system-to-system integrations. Where deterministic UIs are required, however, agentic software development again acts as an accelerator. Once the intelligence layer is validated, UI scaffolding, form generation, and workflow visualisation can be produced rapidly without distracting from core architectural decisions.

As AI tools absorb mechanical implementation tasks, developers spend more time:

- Shaping system intent

- Defining boundaries between non-deterministic reasoning and deterministic enforcement

- Designing guardrails and policy layers

- Reviewing outputs and validating behaviour

- Planning for resilience as models evolve

Developers move from primary implementers to stewards of intelligent systems.

- Agent-first development defines what must be built first.

- Agentic software development makes it feasible to build it quickly and responsibly.

Together, they enable a disciplined but accelerated path from experimentation to production.

Conclusion

Traditional software architecture optimised for control. Predictable inputs, predictable outputs, predefined paths. Agentic solutions optimise for reasoning under uncertainty.

This inversion changes where complexity lives. Intelligence is no longer an enhancement layered onto workflows. It becomes the foundation upon which workflows are constructed.

Designing intelligence first is not a stylistic preference. It is a structural requirement for building systems that combine non-deterministic reasoning with deterministic execution safely and responsibly.

Interfaces will continue to matter. Deterministic services will remain essential. Governance, safety, and security will only grow in importance. But the architectural centre of gravity has shifted.

Agent-first development recognises this shift. It separates validation from hardening, reasoning from execution, experimentation from production. It forces explicit decisions about autonomy, boundaries, and control before user experiences are locked in.

The organisations that internalise this inversion will not simply build better demos. They will build systems that adapt, evolve, and remain governable as models change.

In an era where intelligence can be embedded almost anywhere, the discipline with which it is architected will determine whether it becomes foundational - or fragile.